llms.txt vs robots.txt: What's the Difference?

Everyone knows robots.txt, but what is llms.txt and why do you need both? A clear comparison for website owners.

As a website owner, you probably already know robots.txt. This file tells search engines which pages they may and may not visit. But now there is a new file on the scene: llms.txt. What is the difference? And do you need both? In this article, we explain it clearly.

What does robots.txt do?

robots.txt dates back to 1994, which makes it one of the oldest conventions on the web. It was formally standardized in 2022 as RFC 9309. The file sits in the root directory of your website and contains instructions for web crawlers. You can use it to indicate which folders or pages a crawler should not visit. It is a traffic controller for bots.

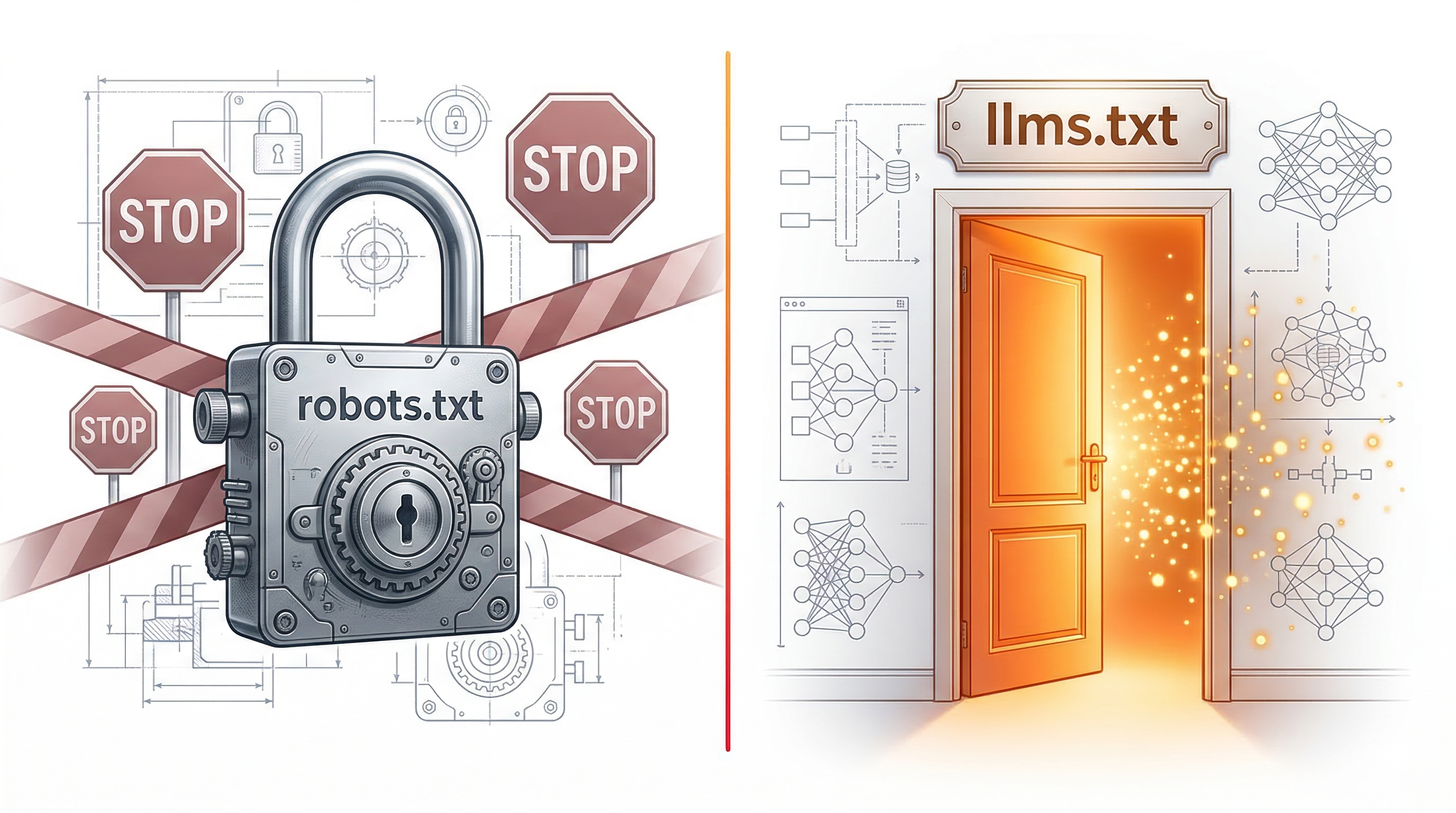

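Important: robots.txt only tells crawlers where they may and may not go. Compliance is voluntary, so well-behaved crawlers follow the rules but nothing enforces them. The file also says nothing about who you are, what your business does, or what products you sell. It is purely a set of technical crawling instructions, not a security measure and not an information source.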
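To make this concrete, here is a minimal sketch in Python that uses the standard library's `urllib.robotparser` to read a small robots.txt and ask whether a crawler may fetch a given URL. The file content, the `ExampleBot` name, and the `example.com` URLs are made up for illustration.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; the paths are illustrative only.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /test/
Allow: /
"""


def is_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if the rules in robots_txt permit user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    for path in ("/", "/products/", "/admin/login"):
        url = "https://example.com" + path
        print(path, is_allowed(ROBOTS_TXT, "ExampleBot", url))
```

Note that the check happens on the crawler's side: the crawler reads the rules and decides for itself whether to respect them.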

What does llms.txt do?

llms.txt has a fundamentally different purpose. Instead of restricting access, it provides information. It is a structured Markdown file that tells AI systems what your business does, what services you offer, how customers can reach you, and which pages are most important.

Where robots.txt says 'do not go here,' llms.txt says 'this is who we are and this is what we do.' It is designed to inform AI models quickly and accurately, without them having to sift through your entire website.
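As a rough illustration of what such a file can look like, the sketch below builds an llms.txt from a few business details. It assumes the layout described in the llms.txt proposal (a title, a short summary in a blockquote, then sections with lists of links). The `Business` and `Link` classes and the bakery data are hypothetical and exist only for this example.

```python
from dataclasses import dataclass, field


@dataclass
class Link:
    title: str
    url: str
    note: str = ""  # optional short description shown after the link


@dataclass
class Business:
    name: str
    summary: str
    sections: dict[str, list[Link]] = field(default_factory=dict)


def render_llms_txt(biz: Business) -> str:
    """Render business details as Markdown in an llms.txt-style layout."""
    lines = [f"# {biz.name}", "", f"> {biz.summary}", ""]
    for heading, links in biz.sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for link in links:
            suffix = f": {link.note}" if link.note else ""
            lines.append(f"- [{link.title}]({link.url}){suffix}")
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


if __name__ == "__main__":
    bakery = Business(
        name="Example Bakery",
        summary="Family bakery selling bread and pastries.",
        sections={
            "Services": [
                Link("Catering", "https://example.com/catering", "Orders for events"),
                Link("Contact", "https://example.com/contact"),
            ]
        },
    )
    print(render_llms_txt(bakery))
```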

Technical differences

robots.txt uses its own syntax with User-agent, Allow, and Disallow rules. It is intended for traditional web crawlers that index pages. llms.txt, on the other hand, uses Markdown, the markup language also used for README files on GitHub. This makes it readable by both humans and AI.

Another difference is the content. robots.txt contains technical instructions and URL patterns. llms.txt contains substantive information: company name, description, contact details, products, services, and links to important pages. It is more of a business card than a traffic sign.
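Because llms.txt is plain Markdown, a program can pull the "business card" details out of it with very little code. The following sketch does this under some assumptions: the file follows the layout from the llms.txt proposal (one `#` title, a `>` summary, `##` sections with link lists), and the sample content is invented. It is not a complete Markdown parser.

```python
import re

# Hypothetical llms.txt content for illustration.
LLMS_TXT = """\
# Example Bakery

> Family bakery selling bread and pastries.

## Services

- [Catering](https://example.com/catering): Orders for events
- [Contact](https://example.com/contact)
"""

# Matches "- [Title](url)" with an optional ": note" at the end.
LINK_RE = re.compile(
    r"^- \[(?P<title>[^\]]+)\]\((?P<url>[^)\s]+)\)(?:: (?P<note>.*))?$"
)


def parse_llms_txt(text: str) -> dict:
    """Extract the name, summary, and per-section links from llms.txt text."""
    result = {"name": None, "summary": None, "sections": {}}
    current = None  # heading of the section currently being read
    for raw in text.splitlines():
        line = raw.strip()
        if line.startswith("# ") and result["name"] is None:
            result["name"] = line[2:].strip()
        elif line.startswith("> ") and result["summary"] is None:
            result["summary"] = line[2:].strip()
        elif line.startswith("## "):
            current = line[3:].strip()
            result["sections"][current] = []
        elif current is not None:
            match = LINK_RE.match(line)
            if match:
                result["sections"][current].append(
                    (match["title"], match["url"], match["note"] or "")
                )
    return result


if __name__ == "__main__":
    print(parse_llms_txt(LLMS_TXT))
```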

Do you need both?

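Yes, both files have their own function and complement each other. robots.txt keeps well-behaved crawlers away from parts of your website that do not need to be indexed (admin pages, internal tools, test environments). Because it is only a request, anything truly sensitive still needs to sit behind a login. llms.txt helps AI systems understand what your business does, which makes it easier for them to recommend you to users.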

Think of it this way: robots.txt is the 'staff only' sign on your back door, llms.txt is the signboard on your front door. You need both for a complete online presence that works for both traditional search engines and the new generation of AI tools.

Getting started

Most websites already have a robots.txt file (your web host or CMS often creates this automatically). Check it by going to yourdomain.com/robots.txt in your browser. For llms.txt, chances are you do not have this yet, as it is a recent proposal rather than an established standard.
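If you prefer to check from a script rather than a browser, a sketch along these lines can help. It assumes both files live at the root of the site, which is where the llms.txt proposal places the file. The function names and the `User-Agent` string are hypothetical. Some sites return a normal page instead of an error for missing files, so treat a positive result as a hint and open the URL to confirm.

```python
from urllib.error import HTTPError
from urllib.request import Request, urlopen


def http_status(url: str, timeout: float = 10.0) -> int:
    """Return the HTTP status code for url, or 0 if the request fails outright."""
    request = Request(url, headers={"User-Agent": "file-check-sketch/0.1"})
    try:
        with urlopen(request, timeout=timeout) as response:
            return response.status
    except HTTPError as err:
        return err.code  # for example 404 when the file is missing
    except OSError:
        return 0  # DNS failure, refused connection, timeout, and similar


def check_site(base_url: str, get_status=http_status) -> dict[str, bool]:
    """Report which of the two files answer with HTTP 200 at the site root."""
    base = base_url.rstrip("/")
    return {
        name: get_status(f"{base}/{name}") == 200
        for name in ("robots.txt", "llms.txt")
    }


if __name__ == "__main__":
    # Replace with your own domain.
    print(check_site("https://example.com"))
```

The `get_status` parameter is there so the check can be tried without network access by passing in a stand-in function.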

The good news: adding an llms.txt file is simple and requires little effort. At llms-txt.nl, we generate a professional file based on your website content. Within minutes, you have both files in place, and together they help make your website easier to find for both Google and AI.

llms-txt.nl editorial team

Articles about AI visibility and llms.txt for Dutch businesses.